While migrating a big XSLT 3 project to plain C#, we ran into a case that was not obvious to resolve.

The documents we process range from tiny to moderate in size. Stored as XML, they take from virtually zero up to, say, 10-20 MB.

In C# we can rewrite XSLT code virtually one-to-one using standard features like XDocument, LINQ, regular classes, built-in collections, and so on. Clearly C# has a richer repertoire, so the task is easy, until you find there are several ways to solve it and have to choose.

The simplest solution is to use the XDocument API to represent data at runtime, and LINQ to query it. All features like XSLT keys, templates, functions, XPath sequences, arrays, maps and primitive types map naturally onto the C# language and its APIs.

Having rewritten several XSLT transformations, we could see that the XSLT to C# rewrite is rather straightforward and produces recognizable functional programs whose source size is close to that of the original XSLT. As a bonus, C# lets you write asynchronous code, so C# wins in runtime scalability and in design-time support.

But can you do it better in C#, especially when some data has well defined xml schemas?

The natural step, in our opinion, would be to produce a plain C# object model from the XML schema and use it for runtime processing.

Fortunately, .NET has XML serialization attributes and tools to produce classes from XML schemas. With little effort we created a class hierarchy for a rather big XML schema. XmlSerializer is used to convert the object model to and from XML through XmlReader and XmlWriter. So we get a typed replacement for the generic XDocument that still supports the same LINQ API over collections of objects, and takes less memory at runtime.

The next step was to run a simple test:

- read object model;

- transform it;

- write it back.

We have created such tests both for XDocument and for object model cases, and compared results from different perspectives.

Both solutions produce very similar code, which is also similar to the original XSLT in both style and size.

The object model has static typing, which makes the code much easier to maintain.

But the most unexpected outcome is that the object model was up to 20% slower, due to serialization and deserialization, even with pregenerated XmlSerializer assemblies. The difference in transformation performance and memory consumption was so unnoticeable that it can be neglected. These results were confirmed by multiple tests with multiple cycles, including warm-up cycles.

Here we ran into a case where static typing harms more than it helps. Because our processing pipeline is an offline batch, this difference can translate into tens of minutes or even more.

Thus in this particular case we decided to stay with runtime typing as the more performant way of processing in C#.

XSLT is often thought of as a tool that takes input XML and runs a transformation to get HTML or some XML as output. Our use case is more complex, and is closer to batch data mining of big data. Our transformation pipelines often take an hour or more to run, even with SSD disks and with CPU cores fully loaded with work.

So, we're looking for performance opportunities, and xml vs json might be promising.

Here are our hypotheses:

- json is lighter than xml to serialize and deserialize;

- json stored as map(*), array(*) and other items is lighter than node() at runtime; in particular, subtree copy is zero cost in json;

- templates with match patterns can be efficiently implemented with maps;

- there is an incremental way forward from use of xml to use of json.

If it pays off we might be switching xml format to json all over, even though it is a development effort.

But to proceed we need to run an experiment to measure the processing speed of xml vs json in xslt.

Now our task is to find an isolated small representative sample to prove or reject our hypotheses.

Better to start off with some existing transformation, and change it from use of xml to json.

The question is whether there is such a candidate.

A couple of days ago, while integrating with someone's C# library, we had to debug it, as something went wrong.

The code is big and obscure but for the integration purposes it's rather simple: you just create and call a class, that's all.

Yet something just did not work. We had to prove that it was not our fault, as the other side was uncooperative and would not run a joint debug session to resolve the problem.

To simplify the matter as much as possible here is the case:

var input = ...

var x = new X();

var output = x.Execute(input);

You pass correct input, and get correct output. Simple, right? But it did not work!

So, we delved into the foreign code, and this is what we have seen:

class X: Y
{
  public Output Execute(Input input)
  {
    return Perform(input);
  }

  protected override Output Run(Input input)
  {
    ...
    return output;
  }
}

class Y: Z
{
  ...
}

class Z
{
  protected Output Perform(Input input)
  {
    return Run(input);
  }

  protected virtual Output Run(Input input)
  {
    return null;
  }
}

You see, the flow is still simple, right?

We call X.Execute(), it calls Z.Perform(), which in turn calls the overridden X.Run() that returns the result.

But to our puzzlement we got null on output, as if Z.Run() was called!

We stepped through the code in the debugger and confirmed that Z.Perform() calls Z.Run(), even though the "this" instance is of type X.

How can it be? It's nonsense! Yet no overridden method was ever called.

No matter how much scrutiny we applied to the sources of X and Z, it just did not work.

We verified that the signature of X.Run() matches the signature of Z.Run(), so it overrides the method.

Then what do we see here?

And then enlightenment came! Yes, X.Run() overrides the method, but which method?

We looked closely at class Y, and bingo, this is what we found there:

class Y: Z
{
  ...

  protected virtual Output Run(Input input)
  {
    return null;
  }

  ...
}

So, X.Run() overrides Y.Run() and not Z.Run()!

Per .NET, Y.Run() and Z.Run() are two independent virtual methods, where Y.Run() in addition hides Z.Run().

The IDE even issued a warning that it's better to declare Y.Run() as:

protected new virtual Output Run(Input input)
{
  return null;
}

So, someone's code was plainly wrong: Y.Run() had to use override rather than virtual.

We won, right?

Well, it's hard to call it a win.

We spent an hour looking at someone's ugly code just to prove we're still sane.

So, what is the conclusion of this story?

We think here it is:

- be cautious while looking at someone's code;

- look at IDE warnings, don't disregard them, and try to resolve all of them in your code base;

- try to write simple code.

Lately we have spent a great deal of time working with Azure's CosmosDB.

This is how it's defined:

"It is schema-agnostic, horizontally scalable, and generally classified as a NoSQL database."

This quote, rather unconfident in itself, is clarified further down as:

"The SQL API lets clients create, update and delete containers and items. Items can be queried with a read-only, JSON-friendly SQL dialect."

To be honest this SQL API made us favor CosmosDB.

So, we started a development with CosmosDB as a data storage.

The next development ingredient we learned the hard way is to try to refrain from clever techniques.

The lesson we learned is simple: after you finish a project, provided it's not a toy, you hand it over to the people who will support it. You should think about those future developers before you insert some cleverness into your code.

With this common sense we selected EF Core as a library that will serve as an interface between C# and the database.

Initially all went well, until we needed to store a list of strings as a document property and found that it's not possible.

Why? - was a naive question.

The answer puzzled us a lot: because a string is not an "Entity" (whatever that means), and EF is a framework of entities.

You could argue with this argument as long as you like, it just does not work. It is especially bad if you need to store a class that you do not directly control e.g. structures returned from other services.

The next pothole with EF was when we tried to run an innocent query that joins data within a document: e.g. a document contains items, and you want to query some items from some documents.

Guess what?

Right, EF Core does not support it.

Why?

Because!

Later we found that many other constructs and functions that you easily use in the SQL dialect of CosmosDB are not possible or not supported in EF Core.

We were very upset with those crutches and came to a conclusion that EF Core harms more than helps when you work with CosmosDB.

We went on and looked at how you work directly with the CosmosDB client, and found that it has all the features ready:

- you may give it SQL and bind parameters;

- you may convert result items to objects;

- you may create, delete, update and query data.

So, do we need EF Core?

We answered, no.

This does not mean we reject the value of EF Core, but here our conclusion was that this API layer just complicated things instead of making them simpler. It might be that EF Core for CosmosDB is not mature enough at this time.

Recently we have found that BinaryFormatter.Serialize and BinaryFormatter.Deserialize methods are marked as obsolete in .NET 5.0, and are declared dangerous:

The BinaryFormatter type is dangerous and is not recommended for data processing. Applications should stop using BinaryFormatter as soon as possible, even if they believe the data they're processing to be trustworthy. BinaryFormatter is insecure and can't be made secure.

See BinaryFormatter security guide for more details.

That guide, along with its links, expands on the problems BinaryFormatter poses. The pattern of dangerous use cases we have seen so far is this:

- two different sides communicate with each other;

- one side supplies input in BinaryFormatter's format;

- other side reads input using BinaryFormatter and instantiates classes.

A threat arises when the two sides cannot trust each other or cannot establish a trusted communication channel. In these cases malicious input can be supplied to the side reading the data, which might lead to unexpected code execution, denial of service, data exposure and other bad consequences.

Arguing like this, today's maintainers of .NET concluded that it's better to tear BinaryFormatter and similar APIs out of the framework.

Note that they don't claim that BinaryFormatter itself, or the Reflection API that it uses, is the core of the problem. They blame communication.

Spelling out clearly what the concerns are could help everyone better understand how to address them.

In the area of security of communication there are a lot of ready solutions like:

- use signatures to prevent tampering with the data;

- use encryption to prevent spying on the data;

- use access rights to prevent access to the data altogether;

- use secure communication channels.
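As an illustration of the first item, here is a minimal sketch, in Python for brevity, of signing a serialized payload and verifying the signature before deserializing it. It assumes both sides share a secret key; pickle stands in for BinaryFormatter as a format that instantiates classes while reading, and the names pack and unpack are ours, not part of any library.

```python
import hashlib
import hmac
import pickle

TAG_SIZE = hashlib.sha256().digest_size  # 32 bytes for SHA-256


def pack(obj, key):
    """Serialize obj and prepend an HMAC-SHA256 tag computed with the shared key."""
    payload = pickle.dumps(obj)
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return tag + payload


def unpack(blob, key):
    """Verify the tag first; deserialize only if the payload was not tampered with."""
    tag, payload = blob[:TAG_SIZE], blob[TAG_SIZE:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("signature mismatch: payload rejected")
    return pickle.loads(payload)


if __name__ == "__main__":
    key = b"shared-secret"  # hypothetical key, for illustration only
    blob = pack({"account": "A1", "amount": 10}, key)
    print(unpack(blob, key))
```

The point of the sketch is the order of operations: the reader never hands bytes to the deserializer unless they carry a valid signature from a trusted writer.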

We can surely state that without applying these solutions no other serialization format is reliable either; each is subject to the same vulnerabilities.

After all, it looked like an attempt to throw the baby out with the bath water. The good news is that, thanks to the now modular structure of the .NET runtime, we're able to access binary serialization libraries, which are (and will be) available in NuGet repositories. So, it's a futile effort to erase this useful API.

Earlier we wrote that recently we've gotten a few tasks related to Machine Learning.

The prerequisite for such tasks is to collect and prepare the input data.

Usually the required data is scattered across public sites. Some of it is in plain text format (or close to it), but other data is accessible only as output of public applications. To obtain the required data from such sites you have to navigate through pages, which often requires keeping state between navigations.

In order to implement this task you need some kind of crawler/scraper of the websites.

Fortunately, there are a lot of frameworks, libraries and tools in C# (and in other languages too) that allow you to do this (visit this or this site to see the most popular of them), for example:

- ScrapySharp

- ABot

- HtmlAgilityPack

- DotnetSpider

There are pros and cons to using these libraries. The most crucial con is the lack of support for rich UIs based on heavy client-side scripts and client-side state. Not all such libraries implement full browser emulation; moreover, some of them do not support JavaScript execution at all.

So, they are suitable for gathering information from simple web pages, but no library lets you easily navigate to some page of a web application that keeps rich client-side state. Even the best of them, like ScrapySharp, require heavy programming to achieve the result.

Then, suddenly, we recalled that for several years already we have been using Selenium and web drivers to automate web tests for AngularJS/Angular projects.

After a short discussion we came to the conclusion that there is no big difference between testing a web application and collecting data, since one of the testing stages is collecting actual results (data) from the tested page, and usually our tests consist of chains of actions performed on consecutively visited pages.

This way we came to the idea of using the WebDriver API implemented by the Selenium project.

There are implementations of this API in different languages, and in C# too.

Using WebDriver we can easily implement cumbersome navigation of a complex web application and collect the required data. Moreover, it allows running WebDriver in headless mode.

Some of its features allow you to take snapshots of the virtual screen and to store the HTML source that results from JavaScript execution. These features are very useful during run-time troubleshooting. To create a complex web application navigation we need only a bit more knowledge than a usual web application user: we need to identify page elements somehow, for example by CSS selectors or by id of HTML elements (as we do for tests). All the rest, like cookies, view state (if any), values of hidden fields, and some JavaScript events, will be transparent in this case.

Although one may say that the approach with Selenium is rather fat, it ought to be mentioned that it is rather scalable.

You may either run several threads with a different WebDriver instance in each thread, or run several processes simultaneously.

However, besides the pros there are cons in the solution with Selenium. They will appear when you decide to publish it, e.g. to the Azure environment.

Note that the approach with Selenium requires a browser on the server. There is also a problem with Azure itself, as it's Microsoft's platform and Selenium is a product of their main competitor Google... So, some issues aren't technical. The only possible solution is to use the PaaS approach instead of SaaS, but in this case you have to support everything by yourself...

The other problem is that if your application implements rather aggressive crawling, either the servers where you gather data or your own host might ban it.

So, be gentle, play nice, and implement delays between requests.

Also, take into account that when you're implementing any crawler, some problems may appear on the legal level, since not all web sites allow you to pull anything you want.

Many sites have terms & conditions that define rules for the site users (which your crawler should follow too); otherwise legal action may be taken against them (or their owners, in the case of a crawler). There is a very interesting article that describes many pitfalls you may meet when you implement your own crawler.

To summarize everything we said earlier, the Selenium project can be used in many scenarios, and one of them is to create a powerful crawler.

While doing Cool:GEN migrations to Java and C# we produce rather big Angular applications.

Everything is fine: the server runs REST APIs, and the client is an Angular application with a component per each window, dialog or screen. The only problem is with the word big.

We observe that enterprises that used Cool:GEN to develop their workflow come to the migration stage with applications containing thousands of windows. In simple cases, after assessment, clients are able to split their monolith workflow into a set of independent applications. But even then we are dealing with Angular applications containing hundreds to many thousands of components.

Now, let's look at the Angular world. Best practices advise you to (and actually almost force you to) use Ahead Of Time, Ivy compilation of all components and their templates.

A naive attempt to build such a monolith Angular application will most surely fail. Angular uses nodejs for the build, and chances are close to 100% that nodejs will run out of memory during ng build.

You may fight this and throw at it a capable build machine with 16 or, better, 32 GB of RAM, and instruct nodejs to use all of it.

Well, it's a rather poor and extensive way of dealing with scale problems, but it works.

The next hurdle you run into is time. We know it might take days just to build it.

You may ask why?

Well, angular is doing its best to validate templates!

Unfortunately the only viable workaround is to switch this nice feature off for such a big project.

With such a setup you're able to build the Angular project in just 20-30 minutes!

Well, this is big progress if you compare complete failure with something that at least passes the build.

But what's next?

Sure, there are further problems:

- scripts, both development and production, are of nonsensical size: several dozen megabytes for production, and an even higher number for development;

- ng serve eats even more memory and builds even longer, making a nightmare out of development and support of such an application;

- startup of such an application, if it starts at all, is very slow.

So, what can be done? How can we create a manageable Angular application containing that many components?

Angular advises lazy-loading feature modules.

That's reasonable advice!

We can create coarse grained modules containing subsets of components or fine grained modules containing one component.

So, does it help?

Yes, but it does not solve all problems:

- ng build and ng serve are still very slow;

- the build produces multiple small scripts that are loaded on demand, so at least the application works in the browser.

Yet another important problem is still there: we have multiple, severely separated server REST controllers with components that represent them.

On the server side we have Java or C# REST controllers hosting business logic. They are separated into multiple projects, probably managed by separate teams, and probably kept in separate Git repositories (or whatever). On the client side we have a fat Angular project with everything kept in a single source repository.

This is not going to work from a management perspective.

So the next step is to try to split the fat Angular project into multiple small projects. Let's say we shall have 100 small Angular libraries combined by a master project.

This is not going to work either, due to the nature of npm projects, as it will require terabytes of cloned node_modules folders for each library, and many hours to build each of them.

It seems there is room for improvement in the npm area. There is no point in making dedicated copies of node_modules. It's much easier to have a local cache of artifacts.

So, what is the direction? How do we create a big Angular project after all?

What we have here is:

- a big enterprise level application;

- it is modular but modules must work together to form desired workflow;

- different modules are supported by different teams (both server and client side);

- client Angular components correspond to REST controllers on the server;

- all pages use the same styles and the same set of UI controls.

Looking from this perspective all development can be seen as:

- development and support of unified styles and ui components that must be reused through the application;

- development of server side and REST controllers that implement business logic;

- development of templates of components (note that components themselves do nothing except expose their templates).

Studying this design suggests an important and independent role for templates, just like in AngularJS!

In contrast, Angular templates are only a tool used by components. It's not obvious how to use thousands of templates without first building thousands of components; neither is it obvious how to host those templates dynamically (routes do not help here).

Though not obvious, it's still possible to do it, though it requires use of a somewhat lower level API than tutorials suggest. The ingredients are:

- use of Just In Time (in contrast to Ahead Of Time) compilation, and use of View Engine (in contrast to Ivy);

- use ViewContainerRef to host components dynamically;

- dynamic components and modules that you can create on demand using templates loaded e.g. through HttpClient.

To keep things short, we shall show an example of dynamic components in the next article.

Here we shall emphasize that such a design allows us to create a small Angular application that builds in under 20 seconds, with component templates served along with the REST controllers and stored in the same Git repository.

So, if you have, say, a server subproject exposing a REST controller in the form api/area/MyComponent, then its template may be exposed as the resource page/area/MyComponent. Templates are loaded and compiled on demand at runtime, thus making the application light. At the same time, templates may be cached in the browser cache, thus reducing the number of roundtrips to the server.

Eventually we've started to deal with tasks that required machine learning. Thus, a good tutorial for ML.NET was required, and we found this one, which comes with good, simple code samples. Thanks to Jeff Prosise. Hope this may be helpful to you too.

Recently our colleague turned to us and asked for help with some complex query.

It turned out that the complex part was to understand what he wanted to achieve.

After listening to him we formulated the task in our own words and confirmed that this was what he wanted.

So, that's the task in our formulation:

- Assume you have events.

- Each event acts upon one or more accounts.

- Find all events that act on the same set of accounts.

- Note we deal with multiple millions of events and accounts.

Data is defined like this:

create table dbo.Event
(
  EventID bigint not null,
  AccountID varchar(18) not null,
  primary key(EventID, AccountID)
);

The requested query turned out to be very simple, yet not as simple as one would think, because it has to account for the big amount of data:

with D as
(
  select * from dbo.Event
),
S as
(
  select
    EventID,
    count(*) Items,
    checksum_agg(checksum(AccountID)) Hash
  from
    D
  group by
    EventID
)
select
  S1.EventID, S2.EventID
from
  S S1
  inner join
  S S2
  on
    S1.EventID < S2.EventID and
    S1.Items = S2.Items and
    S1.Hash = S2.Hash and
    not exists
    (
      select AccountID from D where EventID = S1.EventID
      except
      select AccountID from D where EventID = S2.EventID
    );

The idea is to:

- calculate a hash derived from list of accounts for each group;

- join groups with the same hash;

- verify that matched groups fit perfectly.

An even simpler solution that does not use hashes is not scalable, as its performance is worse than O(N^2), where N is the number of events. It has unacceptable running time with N ~1e4, to say nothing of N ~1e7.
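The three steps of the idea can also be sketched outside SQL. The following is a minimal in-memory illustration in Python, not the query we ran: the function name is ours, rows are assumed to be (EventID, AccountID) pairs, and XOR of CRC32 values stands in for checksum_agg(checksum(...)).

```python
from collections import defaultdict
from itertools import combinations
from zlib import crc32


def same_account_set_pairs(rows):
    """Return pairs (e1, e2), e1 < e2, of events acting on the same set of accounts."""
    # Group accounts per event.
    accounts = defaultdict(set)
    for event_id, account_id in rows:
        accounts[event_id].add(account_id)

    # Bucket events by (number of accounts, order-independent hash of accounts).
    buckets = defaultdict(list)
    for event_id, accs in accounts.items():
        h = 0
        for account_id in accs:
            h ^= crc32(account_id.encode("utf-8"))
        buckets[(len(accs), h)].append(event_id)

    # Only events in the same bucket are compared; the exact set comparison
    # removes hash collisions, as "not exists (... except ...)" does in SQL.
    pairs = []
    for ids in buckets.values():
        for e1, e2 in combinations(sorted(ids), 2):
            if accounts[e1] == accounts[e2]:
                pairs.append((e1, e2))
    return sorted(pairs)


if __name__ == "__main__":
    rows = [(1, "A"), (2, "A"), (3, "A"), (3, "B"), (4, "B"), (4, "A"), (5, "C")]
    print(same_account_set_pairs(rows))
```

The hash only narrows down candidates; correctness still rests on the final exact comparison, which is why the query keeps the except check.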

At this point our colleague was already satisfied, as he got the result in a couple of minutes for a task that he could not even formalize as SQL.

But we felt it could be even better.

We looked at statistics:

with D as
(
  select * from dbo.Event
),
S as
(
  select
    EventID,
    count(*) Items
  from
    D
  group by
    EventID
)
select
  Items, count(*) EventCount
from
  S
group by
  Items
order by
  EventCount desc;

and have seen that most of the events, about 90%, deal with a single account, and all others with two or more (some of them act upon a big number of accounts).

The nature of the dataset gave us a hint for a more verbose but faster query:

with D as
(
  select * from dbo.Event
),
S as
(
  select
    EventID,
    min(AccountID) AccountID,
    count(*) Items,
    checksum_agg(checksum(AccountID)) Hash
  from
    D
  group by
    EventID
)
select
  S1.EventID, S2.EventID
from
  S S1
  inner join
  S S2
  on
    S1.EventID < S2.EventID and
    S1.Items = 1 and
    S2.Items = 1 and
    S1.AccountID = S2.AccountID
union all
select
  S1.EventID, S2.EventID
from
  S S1
  inner join
  S S2
  on
    S1.EventID < S2.EventID and
    S1.Items > 1 and
    S2.Items > 1 and
    S1.Items = S2.Items and
    S1.Hash = S2.Hash and
    not exists
    (
      select AccountID from D where EventID = S1.EventID
      except
      select AccountID from D where EventID = S2.EventID
    );

This query produced results in twenty seconds instead of a couple of minutes for a dataset with ~1e7 rows.

We are not sure what the use of our XSLT Graph exercises is, but what we are sure of is that they stress different parts of the Saxon XSLT engine and help to find and resolve different bugs.

While implementing the biconnected components algorithm we incidentally ran into an internal error in Saxon 10.1 with a rather simple XSLT:

<?xml version="1.0" encoding="utf-8"?>
<xsl:stylesheet version="3.0" xmlns:xsl="http://www.w3.org/1999/XSL/Transform"
  xmlns:xs="http://www.w3.org/2001/XMLSchema"
  xmlns:array="http://www.w3.org/2005/xpath-functions/array"
  exclude-result-prefixes="xs array">

  <xsl:template match="/">
    <xsl:sequence select="
      array:fold-left
      (
        [8, 9],
        (),
        function($first as item(), $second as item())
        {
          min(($first, $second))
        }
      )"/>
  </xsl:template>

</xsl:stylesheet>

More details can be found at Saxon's issue tracker: Bug #4578: NullPointerException when array:fold-left|right $zero argument is an empty sequence.

The bug was promptly resolved.

While working on an algorithm to trace biconnected components for the Graph API in XSLT, we realized that we had implemented it unconventionally.

The pseudocode in Wikipedia is:

GetArticulationPoints(i, d)
  visited[i] := true
  depth[i] := d
  low[i] := d
  childCount := 0
  isArticulation := false

  for each ni in adj[i] do
    if not visited[ni] then
      parent[ni] := i
      GetArticulationPoints(ni, d + 1)
      childCount := childCount + 1
      if low[ni] ≥ depth[i] then
        isArticulation := true
      low[i] := Min (low[i], low[ni])
    else if ni ≠ parent[i] then
      low[i] := Min (low[i], depth[ni])

  if (parent[i] ≠ null and isArticulation) or (parent[i] = null and childCount > 1) then
    Output i as articulation point

That algorithm is based on the fact that a connected graph can be represented as a tree of biconnected components. Vertices of such a tree are called articulation points. The implementation deals with the depth of each vertex, and with a lowpoint parameter that is also related to vertex depth during Depth-First-Search.

Out of interest we approached the problem from a different perspective. A vertex is an articulation point if it has neighbors that cannot be connected by a path not containing this vertex. Like the classical algorithm, we use Depth-First-Search to navigate the graph, but in contrast we collect cycles that pass through each vertex. If during the back pass of Depth-First-Search we find no cycle from a "child" to an "ancestor", then the vertex is necessarily an articulation point.

Here is pseudocode:

GetArticulationPoints(v, p) -> result
  index = index + 1
  visited[v] = index
  result = index
  articulation = (p = null) ? -1 : 0

  for each n in neighbors of v except p do
    if visited[n] = 0 then
      nresult = GetArticulationPoints(n, v)
      result = min(result, nresult)

      if nresult >= visited[v] then
        articulation = articulation + 1
    else
      result = min(result, visited[n])

  if articulation > 0 then
    Output v as articulation point
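To make our pseudocode concrete, here is a minimal runnable sketch of it in Python. It is a direct transcription, not the XSLT implementation; it assumes a simple undirected graph given as a dictionary from vertex to a list of neighbors, and the function and variable names are ours.

```python
def articulation_points(graph):
    """Return the set of articulation points of a simple undirected graph.

    graph: dict mapping each vertex to an iterable of its neighbors.
    """
    visited = {v: 0 for v in graph}  # 0 means "not visited yet"
    points = set()
    index = 0

    def visit(v, parent):
        nonlocal index
        index += 1
        visited[v] = index
        result = index
        # The root needs two independent subtrees; any other vertex needs one.
        articulation = -1 if parent is None else 0

        for n in graph[v]:
            if n == parent:
                continue
            if visited[n] == 0:
                nresult = visit(n, v)
                result = min(result, nresult)
                # No cycle from the subtree of n back above v.
                if nresult >= visited[v]:
                    articulation += 1
            else:
                result = min(result, visited[n])

        if articulation > 0:
            points.add(v)
        return result

    for v in graph:
        if visited[v] == 0:
            visit(v, None)
    return points


if __name__ == "__main__":
    # Two triangles sharing vertex 3.
    graph = {1: [2, 3], 2: [1, 3], 3: [1, 2, 4, 5], 4: [3, 5], 5: [3, 4]}
    print(articulation_points(graph))
```

The sketch uses plain recursion, so very deep graphs would need an explicit stack or a higher recursion limit.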

The complexity of both algorithms is the same.

What is interesting is that we see no obvious way to transform one algorithm into the other, except by starting from Graph theory.

More is on Wiki.

Michael Kay's "A Proposal for XSLT 4.0" has sparked our interest in what could be added or changed in XSLT. So we decided to implement a Graph API purely in XSLT. Our goals were:

- to prove that it's possible to provide efficient implementations of different graph algorithms in XSLT;

- to build the Graph API in such a way that an engine could provide native implementations of graph algorithms;

- to find through experiments what could be added to XSLT as a language.

At present we may confirm that the first two goals are reachable; and experiments have shown that XSLT could provide more help to make programs better, e.g. we have seen that the language could simplify coding of loops.

Graph algorithms are often expressed with while loops, e.g. "Dijkstra's algorithm" has:

while Q is not empty:
  u ← vertex in Q with min dist[u]

The body is executed while the condition is satisfied, but the condition is affected by the body itself.

In xslt 3.0 we did this with simple recursion:

<xsl:template name="f:while" as="item()*">
  <xsl:param name="condition" as="function(item()*) as xs:boolean"/>
  <xsl:param name="action" as="function(item()*) as item()*"/>
  <xsl:param name="next" as="function(item()*, item()*) as item()*"/>
  <xsl:param name="state" as="item()*"/>

  <xsl:if test="$condition($state)">
    <xsl:variable name="items" as="item()*" select="$action($state)"/>

    <xsl:sequence select="$items"/>

    <xsl:call-template name="f:while">
      <xsl:with-param name="condition" select="$condition"/>
      <xsl:with-param name="action" select="$action"/>
      <xsl:with-param name="next" select="$next"/>
      <xsl:with-param name="state" select="$next($state, $items)"/>
    </xsl:call-template>
  </xsl:if>
</xsl:template>

But here is the point. It could be done in a more comprehensible way, e.g. by letting xsl:iterate without select loop until xsl:break is reached.

<xsl:iterate>
  <xsl:param name="name" as="..." select="..."/>

  <xsl:if test="...">
    <xsl:break/>
  </xsl:if>

  ...
</xsl:iterate>

So, what we propose is to make xsl:iterate/@select optional, and to change the behavior of the processor when the attribute is missing from a compilation error to a valid behavior.

This should not impact any existing valid XSLT 3.0 program.
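For readers less at home in XSLT, the shape of the recursive f:while template can be sketched in Python as a generator. This is only an analogy under our own naming (while_loop, next_state): the state is threaded explicitly through the loop, which is what the template does with its recursive call, and what the proposed xsl:iterate without select would do with its parameters.

```python
def while_loop(condition, action, next_state, state):
    """Yield the items produced by action(state) while condition(state) holds.

    Mirrors f:while: condition tests the state, action produces output items,
    next_state derives the new state from the old state and those items.
    """
    while condition(state):
        items = action(state)
        yield from items
        state = next_state(state, items)


if __name__ == "__main__":
    # Squares of 1, 2, 3: the state is a counter, the body emits its square.
    squares = while_loop(
        lambda s: s < 4,
        lambda s: [s * s],
        lambda s, items: s + 1,
        1,
    )
    print(list(squares))
```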

Recently we've read the article "A Proposal for XSLT 4.0", and thought it worthwhile to suggest one more idea. We have written a message to Michael Kay, the author of this proposal. Here it is:

A&V

Historically xslt, xquery and xpath were dealing with trees. Nowadays it has become much more common to process graphs. Many tasks can be formulated in terms of graphs, and in particular any task processing trees is also a graph task.

I suggest taking a deeper look in this direction.

As an inspiration I may suggest looking at "P1709R2: Graph Library" - the C++ proposal.

Michael Kay

I have for many years found it frustrating that XML is confined to hierarchic relationships (things like IDREF and XLink are clumsy workarounds); also the fact that the arbitrary division of data into "documents" plays such a decisive role: documents should only exist in the serialized representation of the model, not in the model itself.

I started my career working with the Codasyl-defined network data model. It's a fine and very flexible data model; its downfall was the (DOM-like) procedural navigation language. So I've often wondered what one could do trying to re-invent the Codasyl model in a more modern idiom, coupling it with an XPath-like declarative access language extended to handle networks (graphs) rather than hierarchies.

I've no idea how close a reinvention of Codasyl would be to some of the modern graph data models; it would be interesting to see. The other interesting aspect of this is whether you can make it work for schema-less data.

But I don't think that would be an incremental evolution of XSLT; I think it would be something completely new.

A&V

I was not so radical in my thoughts.

Even the C++ API is not so radical, as it does not impose hard requirements on the internal graph representation but rather defines a template API that works both with third-party representations (they even mention Fortran) and with several built-in implementations that use standard vectors.

Their strong point is in the algorithms provided as part of the library, not in the internal graph structure (I think the authors of that paper have not structured it in the best way). E.g. in the second part they list graph algorithms: Depth First Search (DFS); Breadth First Search (BFS); Topological Sort (TopoSort); Shortest Paths Algorithms; Dijkstra Algorithms; and so on.

If we try to map it to the XPath world, then a graph at the API level might be represented as a user function or as a map of user functions.

At the storage level a user may implement a graph using a sequence of maps or a map of maps, or even using XDM elements.

So, my approach is evolutionary. In fact I suggest a pure API that could even be implemented now.

Michael Kay

Yes, there's certainly scope for graph-oriented functions such as closure($origin, $function) and is-reachable($origin, $function) and find-path($origin, $destination, $function) where we use the existing data model, treating any item as a node in a graph, and representing the arcs using functions. There are a few complications, e.g. what's the identity comparison between arbitrary items, but it can probably be done.

A&V

> There are a few complications, e.g. what's the identity comparison between arbitrary items, but it can probably be done.

One approach to address this is through the definition of the graph API, e.g. to define a graph as a map (an interface analogy) of functions, with equality functions if required:

map

{

vertices: function(),

edges: function(),

value: function(vertex),

in-vertex: function(edge),

out-vertex: function(edge),

edges: function(vertex),

is-in-vertex: function(edge, vertex),

is-out-vertex: function(edge, vertex)

...

}

Not sure how far this will go but who knows.
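To give a feeling of how such an API might look in practice, below is a small sketch in Python rather than XPath. It is only an illustration under several assumptions: the graph is a dictionary of functions holding a subset of the keys from the message; "out-vertex" is taken to be the vertex an edge leaves and "in-vertex" the vertex it enters; the key "vertex-edges" and the function names `successors`, `closure`, `find_path` and `is_reachable` are hypothetical; and `is_reachable` takes an explicit destination. Vertex identity is simply Python equality and hashing, which sidesteps the identity question raised above instead of solving it.

```python
from collections import deque

# Hypothetical storage level: a plain sequence of (source, target) pairs.
edges_data = [("a", "b"), ("a", "c"), ("b", "d"), ("c", "d"), ("e", "a")]

# API level: the graph as a map of functions.
graph = {
    "vertices": lambda: sorted({v for e in edges_data for v in e}),
    "edges": lambda: list(edges_data),
    "out-vertex": lambda edge: edge[0],  # assumed: vertex the edge leaves
    "in-vertex": lambda edge: edge[1],   # assumed: vertex the edge enters
    "vertex-edges": lambda vertex: [e for e in edges_data if e[0] == vertex],
}


def successors(vertex):
    """Arcs as a function: vertices directly reachable from `vertex`."""
    return [graph["in-vertex"](e) for e in graph["vertex-edges"](vertex)]


def closure(origin, arcs):
    """All items reachable from `origin` (breadth first), origin included."""
    seen = {origin}
    order = [origin]
    queue = deque([origin])
    while queue:
        item = queue.popleft()
        for nxt in arcs(item):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def find_path(origin, destination, arcs):
    """A shortest path as a list of items, or None if there is no path."""
    parents = {origin: None}
    queue = deque([origin])
    while queue:
        item = queue.popleft()
        if item == destination:
            path = []
            while item is not None:
                path.append(item)
                item = parents[item]
            return path[::-1]
        for nxt in arcs(item):
            if nxt not in parents:
                parents[nxt] = item
                queue.append(nxt)
    return None


def is_reachable(origin, destination, arcs):
    return find_path(origin, destination, arcs) is not None


print(closure("a", successors))         # ['a', 'b', 'c', 'd']
print(find_path("e", "d", successors))  # ['e', 'a', 'b', 'd']
print(is_reachable("d", "a", successors))  # False
```

Note that the algorithms only ever call `arcs`, so the same functions work whether the storage is a sequence of pairs, a map of maps, or a tree of elements.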

It's no secret that the COVID-19 epidemic will change our world significantly.

It affects the economy and public services, especially the social services of our countries.

We saw this in our country, and now the same is happening in the US.

Probably the same thing is happening in every country that suffers from COVID-19.

Usually, nowadays, such services are exposed online, but nobody expected such an extreme load on these services.

And they start to crumble under such load. Programs start to crash... and somebody has to fix them.

It's just a temporary technical inconvenience when there is staff familiar with such programs and technologies, but what about the situation when the programs and technologies are obsolete?

When the staff who can support them are about to retire due to age, when the knowledge is almost lost...

It's very scary when such applications run very important spheres of our life, such as social services, finance, medicine, defence, etc.

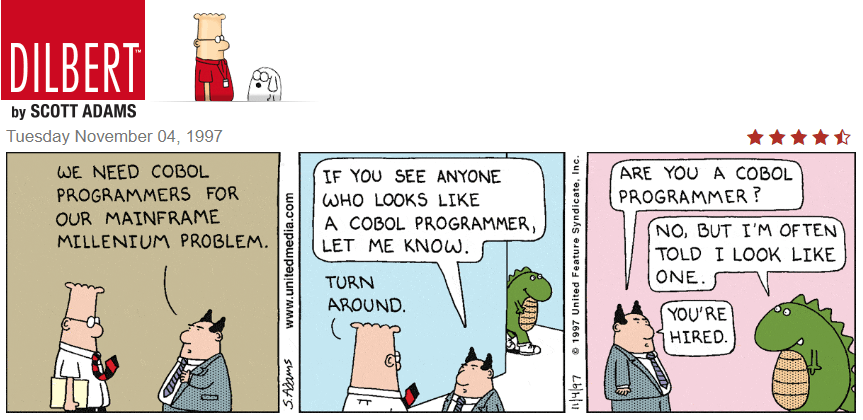

Something similar happened in the US, so the US government asked IBM for help with their systems written in COBOL.

Probably, in the short term, they'll close the gaps, but taking into account the fact that the epidemic won't dissolve in a month, there is a risk of staying in the same hole... There are two ways to solve this issue in the long term:

- to make COBOL a widely used programming language and to teach enough programmers to use it. This is exactly what IBM is trying to do, see this article, but this is a road to nowhere, since COBOL is not very popular among software developers.

- to migrate such applications to new technologies and new platforms (e.g. Java or C# on UNIX/Windows). In this case organizations obtain scalable applications and the ability to find people who can fix and modernize such applications step by step, in spare time, without losing existing functionality. This is what our company, Advanced, may provide.

And we are not alone. There are many such companies that can carry out such a migration at a high level of quality.

And many professionals (even those who deal with COBOL on a day-to-day basis) think that only the second way is viable. Let's see what will happen...

For more about the issue, see here.

People compare these two technologies, AngularJS and Angular, and it seems to be an established fact that Angular is the evolutionarily more advanced framework. We're not going to contradict this; on the contrary, we agree with it, but it's better for an opinion to be grounded in facts that one can evaluate and verify.

Fortunately we got a chance to make such a comparison.

We support conversions of Cool:GEN (a legacy CASE tool with roots in the 1980s) to Java or C#. In its time Cool:GEN made it possible to greatly automate enterprise development, using mainframes as the server side and mainframe terminals or Win32 GUIs as clients.

The legacy of this tool is probably hundreds of business and database models, and millions of programs generated in COBOL on mainframes and in C or Java on Windows and Linux. All this still runs to this day in many economic sectors.

Usually the client is an enterprise that has invested a lot in the design, development and support of its business model using Cool:GEN; now most such clients are trying not to lose this legacy but to convert it into something that keeps pace with today's technologies.

As the original technology is sound, it is possible to map it to today's Java or C# on the server, REST or SOAP as a transport, and Angular, AngularJS or some other framework on the client. Such automatic conversion is the essence of our conversion efforts.

To understand the scope, consider a typical enterprise client that has 2-3 thousand windows backed by 20-30 thousand programs.

Now, consider that the conversion is done. As output, among other things, we produce a clean Java or C# web application with REST and SOAP interfaces, and an Angular or AngularJS web client that encapsulates those 2-3 thousand windows.

Each window definition is rather small, 5-10 KB in HTML form, but the whole mass of windows takes 10-30 MB, which is not small any more.

For AngularJS we generate just those HTML templates, but for Angular we need to generate a separate component for each window, which includes a TypeScript class, a template and a style.

While the amount of generated resources for AngularJS stays within those 10-30 MB, the generated Angular code takes at least 5-10 MB more.

The next step is the build.

AngularJS builds a distribution that includes all used libraries and a set of templates, and it takes something like a minute of CPU time. The produced output is about 300 KB of minified script and those 10-30 MB of templates (multiple files of 5-10 KB each).

Angular (here we talk about version 9) builds a distribution that includes all used libraries and a set of compiled components that are to be loaded lazily on demand. Out of the box, the Angular builder performs tree shaking, and the build takes days. With tree shaking off it takes 40 minutes. This is the first notable difference. The produced output for ES2015 (modern JavaScript) is about 1 MB, plus 15-100 KB per compiled component. This is the second notable difference, and it already affects the end user rather than the developer.

The third difference is in the end-user experience. Though we have built equivalent Angular and AngularJS frontends, we observe that the load time of Angular is higher. This cannot be ascribed only to bigger file sizes. It seems that internal initialization also takes more time in Angular.

So, our experience in this particular test shows that Angular has more room to improve, in particular in compile time, bundle size, runtime speed and simplicity of dynamic loading (we have strong cases where template compilation is not the best approach).
