Back in 2006 and 2007 we have defined dbo.Numbers function:

Numbers table in SQL Server 2005,

Parade of numbers. Such construct is very important in a set based

programming. E.g. XPath 2 contains a range expression like this: "1 to 10" to

return a sequence of numbers.

Unfortunately neither SQL Server 2008 R2, nor SQL Server 2012 support such

construct, so dbo.Numbers function is still actual.

After all these years the function evolved a little bit to achieve a better

performance. Here is its source:

-- Returns numbers table.

-- Table has a following structure: table(value int not null);

-- value is an integer number that contains numbers from 1 to a specified value.

create function dbo.Numbers

(

-- Number of rows to return.

@count int

)

returns table

as

return

with Number8 as

(

select

*

from

(

values

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0),

(0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0), (0)

) N(Value)

),

Number32(Value) as

(

select

0

from

Number8 N1

left join

Number8 N2

on

@count > 0x100

left join

Number8 N3

left join

Number8 N4

on

@count > 0x1000000

on

@count > 0x10000

)

select top(@count) row_number() over(order by @count) Value from Number32;

We're working with an online database, which is ever populated with a new

data. Database activity is mostly around recent data. Activity against older

data declines with increasing the distance from today. The ratio of an amount of a

new data, say for a last month, to the whole data, at present stays at

~1%. The size of database is measured in TBs.

While we're developers and not DBA's, you will see from

a later blog

posts why we're bothered with the database size. In short we're planning to

change some UDF type from smallint to int. This will impact

on many tables, and the task now is to estimate that impact.

Our first attempts to measure the difference between table sizes before and

after type change showed that a data fragmentation often masks the difference, so

we started to look at a way to reduce fragmentation.

Internet is full with recomentations. An advice can be found in BOL at

Reorganize

and Rebuild Indexes.

So, our best help in this task is the function sys.dm_db_index_physical_stats,

which reports statistics about fragmentation.

Analysing what that function has given to us we could see that we had a highly

fragmented data. There was no reason to bear with that taking into an account that

the most of the data stored in the database is historical, which is rarely

accessed and even more rarely updated.

The next simplest instument adviced is:

alter index { index_name | ALL } on <object> reorganize [ PARTITION = partition_number ];

The less trivial but often more efficient instrument is the use of online index

rebuild and index reorganize depending on index type and a level of

fragmentation.

All in all our estimation is that rebuilding or reorganizing indices frees

~100-200GBs of disk space. While, it's only a small percent of total database

size, it gives us several monthes worth of a disk space!

Earlier we overlooked SQL Server API to monitor fragmentation, rebuild, and

reorganize indices, and now we're going to create a job that will regulary

defragment the database.

We have a large table in the form:

create table dbo.Data

(

Date date not null,

Type int not null,

Value nvarchar(50) null,

primary key clustered(Date, Type)

);

create unique nonclustered index IX_Data on dbo.Data(Type, Date);

Among other queries we often need a snapshot of data per each Type for a latest

Date available:

select

max(Date) Date,

Type

from

dbo.Data

group by

Type

We have

found that the above select does not run well on our data set. In fact dbo.Data

grows with time, while snapshot we need stays more or less of the same size. The

best solution to such query is to precalculate it. One way would be to create an

indexed view, but SQL Server does not support max() aggregate in indexed views.

So, we have decided to add additional bit field dbo.Data.Last indicating that

a row belongs to a last date snapshot, and to create filtered index to access

that snapshot:

create table dbo.Data

(

Date date not null,

Type int not null,

Value nvarchar(50) null,

Last bit not null default 0,

primary key clustered(Date, Type)

);

create unique nonclustered index IX_Data on dbo.Data(Type, Date);

create unique nonclustered index IX_Data_Last on dbo.Data(Type)

include(Date)

where Last = 1;

One way to support Last indicator is to create a trigger that will adjust Last

value:

create trigger dbo.Data_Update on dbo.Data

after insert,delete,update

as

begin

if (trigger_nestlevel(@@procid) < 2)

begin

set nocount on;

with

D as

(

select Date, Type from deleted

union

select Date, Type from inserted

),

U as

(

select

V.Date, V.Type

from

D

inner join

dbo.Data V

on

(V.Last = 1) and

(V.Type = D.Type)

union

select

max(V.Date) Date,

V.Type

from

D

inner join

dbo.Data V

on

V.Type = D.Type

group by

V.Type

),

V as

(

select

rank()

over(partition by

V.Type

order by

V.Date desc) Row,

V.*

from

dbo.Data V

inner join

U

on

(V.Date = U.Date) and

(V.Type = U.Type)

)

update V

set

Last = 1 - cast(Row - 1 as bit);

end;

end;

With Last indicator in action, our original query has been transformed to:

select Date, Type

from dbo.Data where Last = 1

Execution plan shows that a new filtered index

IX_Data_Last is used. Execution speed has increased considerably.

As our actual table contains other bit fields, so Last

indicator did not

increase the table size, as SQL Server packs each 8 bit fields in one byte.

Earlier we have shown

how to build streaming xml reader from business data and have reminded about

ForwardXPathNavigator which helps to create

a streaming xslt transformation. Now we want to show how to stream content

produced with xslt out of WCF service.

To achieve streaming in WCF one needs:

1. To configure service to use streaming. Description on how to do this can be

found in the internet. See web.config of the sample

Streaming.zip for the details.

2. Create a service with a method returning Stream:

[ServiceContract(Namespace = "http://www.nesterovsky-bros.com")]

[AspNetCompatibilityRequirements(RequirementsMode = AspNetCompatibilityRequirementsMode.Allowed)]

public class Service

{

[OperationContract]

[WebGet(RequestFormat = WebMessageFormat.Json)]

public Stream GetPeopleHtml(int count,

int seed)

{

...

}

}

2. Return a Stream from xsl transformation.

Unfortunately (we mentioned it already), XslCompiledTransform generates its

output into XmlWriter (or into output Stream) rather than exposes result as

XmlReader, while WCF gets input stream and passes it to a client.

We could generate xslt output into a file or a memory Stream and then return

that content as input Stream, but this will defeat a goal of streaming, as

client would have started to get data no earlier that the xslt completed its

work. What we need instead is a pipe that form xslt output Stream to an input

Stream returned from WCF.

.NET implements pipe streams, so our task is trivial.

We have defined a utility method that creates an input Stream from a generator

populating an output Stream:

public static Stream GetPipedStream(Action<Stream> generator)

{

var output = new AnonymousPipeServerStream();

var input = new AnonymousPipeClientStream(

output.GetClientHandleAsString());

Task.Factory.StartNew(

() =>

{

using(output)

{

generator(output);

output.WaitForPipeDrain();

}

},

TaskCreationOptions.LongRunning);

return input;

}

We wrapped xsl transformation as such a generator:

[OperationContract]

[WebGet(RequestFormat = WebMessageFormat.Json)]

public Stream GetPeopleHtml(int count, int seed)

{

var context = WebOperationContext.Current;

context.OutgoingResponse.ContentType = "text/html";

context.OutgoingResponse.Headers["Content-Disposition"] =

"attachment;filename=reports.html";

var cache = HttpRuntime.Cache;

var path = HttpContext.Current.Server.MapPath("~/People.xslt");

var transform = cache[path] as XslCompiledTransform;

if (transform == null)

{

transform = new XslCompiledTransform();

transform.Load(path);

cache.Insert(path, transform, new CacheDependency(path));

}

return Extensions.GetPipedStream(

output =>

{

// We have a streamed business data.

var people = Data.CreateRandomData(count, seed, 0, count);

// We want to see it as streamed xml data.

using(var stream =

people.ToXmlStream("people", "http://www.nesterovsky-bros.com"))

using(var reader = XmlReader.Create(stream))

{

// XPath forward navigator is used as an input source.

transform.Transform(

new ForwardXPathNavigator(reader),

new XsltArgumentList(),

output);

}

});

}

This way we have build a code that streams data directly from business data to a

client in a form of report. A set of utility functions and classes helped us to

overcome .NET's limitations and to build simple code that one can easily

support.

The sources can be found at

Streaming.zip.

In the previous

post about streaming we have dropped at the point where we have XmlReader

in hands, which continously gets data from IEnumerable<Person>

source.

Now we shall remind about ForwardXPathNavigator - a class we have built

back in 2002, which adds streaming transformations to .NET's xslt processor.

While XslCompiledTransform is desperately obsolete, and no upgrade

will possibly follow; still it's among the fastest xslt 1.0 processors. With

ForwardXPathNavigator we add ability to transform input data of arbitrary size to this processor.

We find it interesting that

xslt 3.0 Working Draft defines streaming processing in a way that closely

matches rules for ForwardXPathNavigator:

Streaming achieves two important objectives: it allows large documents to be transformed

without requiring correspondingly large amounts of memory; and it allows the processor

to start producing output before it has finished receiving its input, thus reducing

latency.

The rules for streamability, which are defined in detail in 19.3 Streamability

Analysis, impose two main constraints:

-

The only nodes reachable from the node that is currently being processed are its

attributes and namespaces, its ancestors and their attributes and namespaces, and

its descendants and their attributes and namespaces. The siblings of the node, and

the siblings of its ancestors, are not reachable in the tree, and any attempt to

use their values is a static error. However, constructs (for example, simple forms

of xsl:number, and simple positional patterns) that require knowledge

of the number of preceding elements by name are permitted.

-

When processing a given node in the tree, each descendant node can only be visited

once. Essentially this allows two styles of processing: either visit each of the

children once, and then process that child with the same restrictions applied; or

process all the descendants in a single pass, in which case it is not possible while

processing a descendant to make any further downward selection.

The only significant difference between ForwardXPathNavigator and

xlst 3.0 streaming is in that we reported violations of rules for streamability

at runtime, while xslt 3.0 attempts to perform this analysis at compile time.

Here the C# code for the xslt streamed transformation:

var transform = new XslCompiledTransform();

transform.Load("People.xslt");

// We have a streamed business data.

var people = Data.CreateRandomData(10000, 0, 0, 10000);

// We want to see it as streamed xml data.

using(var stream =

people.ToXmlStream("people", "http://www.nesterovsky-bros.com"))

using(var reader = XmlReader.Create(stream))

using(var output = File.Create("people.html"))

{

// XPath forward navigator is used as an input source.

transform.Transform(

new ForwardXPathNavigator(reader),

new XsltArgumentList(),

output);

}

Notice how XmlReader is wrapped into ForwardXPathNavigator.

To complete the picture we need xslt that follows the streaming rules:

<xsl:stylesheet version="1.0" xmlns:xsl="http://www.w3.org/1999/XSL/Transform"

xmlns:msxsl="urn:schemas-microsoft-com:xslt"

xmlns:d="http://www.nesterovsky-bros.com"

exclude-result-prefixes="msxsl d">

<xsl:output method="html" indent="yes"/>

<!-- Root template processed in the streaming mode. -->

<xsl:template match="/d:people">

<html>

<head>

<title>List of persons</title>

<style type="text/css">

.even

{

}

.odd

{

background: #d0d0d0;

}

</style>

</head>

<body>

<table border="1">

<tr>

<th>ID</th>

<th>First name</th>

<th>Last name</th>

<th>City</th>

<th>Title</th>

<th>Age</th>

</tr>

<xsl:for-each select="d:person">

<!--

Get element snapshot.

A

snapshot allows arbitrary access to the element's content.

-->

<xsl:variable name="person">

<xsl:copy-of select="."/>

</xsl:variable>

<xsl:variable name="position" select="position()"/>

<xsl:apply-templates mode="snapshot" select="msxsl:node-set($person)/d:person">

<xsl:with-param name="position" select="$position"/>

</xsl:apply-templates>

</xsl:for-each>

</table>

</body>

</html>

</xsl:template>

<xsl:template mode="snapshot" match="d:person">

<xsl:param name="position"/>

<tr>

<xsl:attribute name="class">

<xsl:choose>

<xsl:when test="$position mod 2 = 1">

<xsl:text>odd</xsl:text>

</xsl:when>

<xsl:otherwise>

<xsl:text>even</xsl:text>

</xsl:otherwise>

</xsl:choose>

</xsl:attribute>

<td>

<xsl:value-of select="d:Id"/>

</td>

<td>

<xsl:value-of select="d:FirstName"/>

</td>

<td>

<xsl:value-of select="d:LastName"/>

</td>

<td>

<xsl:value-of select="d:City"/>

</td>

<td>

<xsl:value-of select="d:Title"/>

</td>

<td>

<xsl:value-of select="d:Age"/>

</td>

</tr>

</xsl:template>

</xsl:stylesheet>

So, we have started with a streamed entity data, proceeded to the streamed

XmlReader and reached to the streamed xslt transformation.

But at the final post about streaming we shall remind a simple way of building

WCF service returning html stream from our xslt transformation.

The sources can be found at

Streaming.zip.

If you're using .NET's IDictionary<K, V> you have probably found

its access API too boring. Indeed at each access point you have to write a code

like this:

MyValueType value;

var hasValue = dictionary.TryGetValue(key, out value);

...

In many, if not in most, cases the value is of a reference type, and you do not

usually store null values, so it would be fine if dictionary

returned null when value does not exist for the key.

To deal with this small nuisance we have declared a couple of accessor

extension methods:

public static class Extensions

{

public static V Get<K, V>(this IDictionary<K, V> dictionary, K key)

where V: class

{

V value;

if (key == null)

{

value = null;

}

else

{

dictionary.TryGetValue(key, out value);

}

return value;

}

public static V Get<K, V>(this IDictionary<K, V> dictionary, K? key)

where V: class

where K: struct

{

V value;

if (key == null)

{

value = null;

}

else

{

dictionary.TryGetValue(key.GetValueOrDefault(), out value);

}

return value;

}

}

These methods simplify dictionary access to:

var value = dictionary.Get(key);

...

For some reason neither .NET's XmlSerializer nor DataContractSerializer allow

reading data through an XmlReader. These APIs work other way round writing data

into an XmlWriter. To get data through XmlReader one needs to write it to some

destination like a file or memory stream, and then to read it using XmlReader.

This complicates streaming design considerably.

In fact the very same happens with other .NET APIs.

We think the reason of why .NET designers preferred XmlWriter to XmlReader in

those APIs is that XmlReader's implementation is a state machine like, while

XmlWriter's implementation looks like a regular procedure. It's much harder to

manually write and to support a correct state machine logic

than a procedure.

If history would have gone slightly

different way, and if yield return, lambda, and Enumerator API appeared before

XmlReader, and XmlWriter then, we think, both these classes looked differently.

Xml source would have been described with a IEnumerable<XmlEvent> instead of

XmlReader, and XmlWriter must be looked like a function receiving

IEnumerable<XmlEvent>. Implementing XmlReader would have meant a creating a

enumerator. Yield return and Enumerable API would have helped to implement it in

a procedural way.

But in our present we have to deal with the fact that DataContractSerializer

should write the data into XmlWriter, so let's assume we have a project that

uses Entity Framework to access the database, and that you have a data class

Person, and data access method GetPeople():

[DataContract(Name = "person", Namespace = "http://www.nesterovsky-bros.com")]

public class Person

{

[DataMember] public int Id { get; set; }

[DataMember] public string FirstName { get; set; }

[DataMember] public string LastName { get; set; }

[DataMember] public string City { get; set; }

[DataMember] public string Title { get; set; }

[DataMember] public DateTime BirthDate { get; set; }

[DataMember] public int Age { get; set; }

}

public static IEnumerable<Person> GetPeople() { ... }

And your goal is to expose result of GetPeople() as XmlReader.

We achieve result with three simple steps:

- Define

JoinedStream - an input Stream implementation that

reads data from a enumeration of streams (IEnumerable<Stream>).

- Build xml parts in the form of

IEnumerable<Stream>.

- Combine parts into final xml stream.

The code is rather simple, so here we qoute its essential part:

public static class Extensions

{

public static Stream JoinStreams(this IEnumerable<Stream> streams, bool closeStreams = true)

{

return new JoinedStream(streams, closeStreams);

}

public static Stream ToXmlStream<T>(

this IEnumerable<T> items,

string rootName = null,

string rootNamespace = null)

{

return items.ToXmlStreamParts<T>(rootName, rootNamespace).

JoinStreams(false);

}

private static IEnumerable<Stream> ToXmlStreamParts<T>(

this IEnumerable<T> items,

string rootName = null,

string rootNamespace = null)

{

if (rootName == null)

{

rootName = "ArrayOfItems";

}

if (rootNamespace == null)

{

rootNamespace = "";

}

var serializer = new DataContractSerializer(typeof(T));

var stream = new MemoryStream();

var writer = XmlDictionaryWriter.CreateTextWriter(stream);

writer.WriteStartDocument();

writer.WriteStartElement(rootName, rootNamespace);

writer.WriteXmlnsAttribute("s", XmlSchema.Namespace);

writer.WriteXmlnsAttribute("i", XmlSchema.InstanceNamespace);

foreach(var item in items)

{

serializer.WriteObject(writer, item);

writer.WriteString(" ");

writer.Flush();

stream.Position = 0;

yield return stream;

stream.Position = 0;

stream.SetLength(0);

}

writer.WriteEndElement();

writer.WriteEndDocument();

writer.Flush();

stream.Position = 0;

yield return stream;

}

private class JoinedStream: Stream

{

public JoinedStream(IEnumerable<Stream> streams, bool closeStreams = true)

...

}

}

The use is even more simple:

// We have a streamed business data.

var people = GetPeople();

// We want to see it as streamed xml data.

using(var stream = people.ToXmlStream("persons", "http://www.nesterovsky-bros.com"))

using(var reader = XmlReader.Create(stream))

{

...

}

We have packed the sample into the project

Streaming.zip.

In the next post we're going to remind about streaming processing in xslt.

For some reason KendoUI DataSource does not allow to access current ajax

request. Indeed, it seems quite natural to have a way to cancel running request.

To achieve a desired effect we have made a small

set of changes in the

RemoteTransport class:

var RemoteTransport_setup = kendo.RemoteTransport.fn.setup;

kendo.RemoteTransport.fn.setup = function()

{

var that = this,

options = RemoteTransport_setup.apply(that,

arguments),

beforeSend = options.beforeSend;

options.beforeSend = functions(request, options)

{

that.abort();

that._request = request;

if (beforeSend && (beforeSend.apply(this, arguments) === false))

{

that._request = null;

return false;

}

request.always(function() { that._request = null; });

}

return options;

}

kendo.RemoteTransport.fn.request = function()

{

return this._request;

}

kendo.RemoteTransport.fn.abort = function()

{

var request = this._request;

if (request)

{

this._request = null;

request.abort();

}

}

These changes allow to get an ajax request instance:

grid.dataSource.request(), or to cancel a request grid.dataSource.abort().

We're pleased to work with Kendo UI. Its design is good, however we find here

and there things we would wish be done better. Here is a list of problems in a

no particular order we

would like to be addressed in the next release:

- RTL is not supported (including correct scroll bar position see

Tunning KendoUI).

- Templates and binding should support a context information along with the data

source. (Why do they use

with statement?)

- attr binding should use jquery.attr() method; there should be prop binding

which is analogous to attr binding.

- There should be custom binding that allows any json object to bind to different

aspects of a widget or an element.

- One should be able to use format/parse functions during binding. (Allow

binding to express as a triple json object?)

parseExact(value, format, culture) method should be rewritten, as it has

nothing in common with parsing data string according to exact format.- Type inference during binding is poor (

parseOption() method). It works neither for string "1,2", nor json " { x: 0 } ", nor for date.

- Binding is not implemented for many components: splitter, grid.

- Splitter's pane should support size="auto".

- Drid does not support totals in group headers, nor it supports header

selection.

- DataSource does not works after remote error, neither it allows to cancel

request.

- innerHtml is used all over the code, thus one cannot rely on jquery.data().

- Grid does not support customization (localization) of a column filter.

- Grid should support data binding of its content.

- One should be able to destroy any widget.

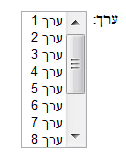

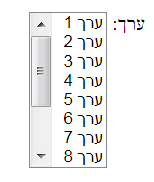

Trying to make KendoUI to work with Hebrew or more generally in RTL environment

we had to find a way to guess the position of scroll bar when direction is rtl.

The problem exists due to the fact that some browsers (Chrome one of them) always

put scroll bars to the right. That's utterly wrong. Consider a label and a listbox:

|

Chrome

|

IE

|

|

|

You can see that the scroll bar appears between the label (on the right) and the

data in the list box (on the left) in Chrome, and on the left side of the list

box in the IE.

We came up with the following test that calculates a scroll bar position in rtl

mode:

<script type="text/javascript">

var _scrollbar;

function scrollbar()

{

if (!_scrollbar)

{

var div = document.createElement("div");

div.style.cssText = "overflow:scroll;zoom:1;clear:both;direction:rtl";

div.innerHTML = "<div> </div>";

document.body.appendChild(div);

_scrollbar =

{

size: div.offsetWidth - div.scrollWidth,

rtlPosition: div.offsetLeft < div.firstChild.offsetLeft

? "left" : "right"

};

document.body.removeChild(div);

}

return _scrollbar;

}

</script>

In conjuction with an approach described in

How to create a <style> tag with Javascript we were able to define

rtl css classes for kendo controls and in particular for the grid, combobox, dropdownlist, and datepicker.

Some time ago we were taking a part in a project where 95% of all sources are xslt

2.0. It was a great experience for us.

The interesting part is that we used xslt in areas we would never expect

it in

early 2000s. It crunched gigabytes of data in offline, while earlier we

generally sought xslt application in a browser or on a server as an engine to render the data.

Web applications (both .NET and java) are in our focus today, and it became hard

to find application for xslt or xquery.

Indeed, client side now have a very strong APIs: jquery, jqueryui, jsview,

jqgrid, kendoui, and so on. These libraries, and today's browsers cover

developer's needs in building managable applications. In contrast, a native

support of xslt (at least v2) does not exist in browsers.

Server side at present is seen as a set of web services. These services

support both xml and json formats, and implement a business logic only. It

would be a torture to try to write such a frontend in xslt/xquery. A server

logic itself is often dealing with a diversity of data sources like databases,

files (including xml files) and other.

As for a database (we primarily work with SQL Server 2008 R2), we think that all

communication should go through stored procedures, which implement all data

logic. Clearly, this place is not for xslt. However, those who know sql beyond

its basics can confirm that sql is very similar to xquery. More than that SQL

Server (and other databases) integrate xquery to work with xml data, and we do

use it extensively.

Server logic itself uses API like LINQ to manipulate with different data

sources. In fact, we think that one can build a compiler from xquery 3.0 to C#

with LINQ. Other way round compiler would be a whole different story.

The net result is that we see little place for xslt and xquery. Well, after all it's only a personal perspective on the subject.

The similar type of thing has happened to us with C++. As with xslt/xquery we

love C++ very much, and we fond of C++11, but at present we have no place in our

current projects for C++. That's pitty.

P.S. Among other things that play against xslt/xquery is that there is a shortage of people who know these languages, thus who can support such projects.

Several days ago we've arrived to the blog "Recursive

lambda expressions". There, author asks how to write a lambda expression

that calculates a factorial (only expression statements are allowed).

The problem by itself is rather artificial, but at times you feel an intellectual

pleasure solving such tasks by yourself. So, putting original blog post aside we

devised our answers. The shortest one goes like this:

- As C# lambda expression cannot refer to itself, so it have to receive itself as

a parameter, so:

factorial(factorial, n) = n <= 1 ? 1 : n * factorial(factorial, n - 1);

- To define such lambda expression we have to declare a delegate type that receives

a delegate of the same type:

delegate int Impl(Impl impl, int n);

Fortunately, C# allows this, but a workaround could be used even if it were not

possible.

- To simplify the reasoning we've defined a two-expression version:

Impl impl = (f, n) => n <= 1 ? 1 : n * f(f, n - 1);

Func<int, int> factorial = i => impl(impl, i);

- Finally, we've written out a one-expression version:

Func<int, int> factorial = i => ((Func<Impl,

int>)(f => f(f, i)))((f, n) => n <= 1 ? 1 : n * f(f, n - 1));

- The use is:

var f = factorial(10);

After that excercise we've returned back to original blog and compared

solutions.

We can see that author appeals to a set theory but for some reason his answer is

more complex than nesessary, but comments contain variants that analogous to our

answer.

Let's start from a distance.

We support a busy database for a customer. Customer's requirement (in fact,

state's requirement) is that the database should have audit logs. This

means that all important requests should be logged. These logs help both for the

offline security analysis, and for the database health monitoring.

Before the end of the last year we used SQL Server 2005, and then customer

has upgraded to SQL Server 2008 R2.

As

by design the database is accessed through Stored Procedures only, so the logging was done

using

a small SP that traced input parameters and execution time. The call to that SP

was inserted throughout the code of other SPs.

We expected SQL Server 2008 R2

to simplify the task, and to allow us to switch

the audit on and off on a fine grained level without the need to change a SP in

the production (see

Understanding

SQL Server Audit for details).

Unfortunatelly, we have almost immediately found that

the current audit implementation traces SP calls but does not store parameter values. This way, you can see that

there was a call "execute X @param1, @param2", but you

have no idea what values were passed. Internet search shows that this a known problem (see

SQL Server 2008 Database Audit on INSERT UPDATE and DELETE actual SQL and not

parameter values), which renders SQL Server Audit useless.

But nevertheless, looking at how can we simplify our hand-made audit we have found a

brilliant solution: "Light

weight SQL Server procedure auditing without using SQL Server auditing".

It's so simple, that it's a shame that we did not invent it ourselves!

The approach is to insert or remove tracing code automatically. Indeed, there is

nothing but data in the database, even the text of SP is only a data.

To automate it even more, we have defined a small table with names of procedures

and their log levels, and have defined a procedure "Log.SetLevel @level" to configure

all logging in one go. In addition we have simplified logging procedures and

tables, and started to store parameters in xml columns rather than in a

pipe-concatenated strings.

Now, to the negative SP execution times.

The logging code among other things measures current_timestamp at

the begin and at the end of the execution of SP. This helps us (as developers)

to monitor how database performs on a day to day basis, and to build many useful

statistics.

For example we can see that the duration of about 10% of untrivial selects is

0ms (execution time is under 1ms). This means that SQL Server is good at

data caching. But what is most interesting is that about 0.1% of requests have

negative duration!

You could speculate

on parallel or on out of order execution, but the paradox is

resolved when you look closely on a value of duration. It's always around of -7,200,000ms. No

one will assume that execution has ended two hours before it has started. So,

what does it mean -2 hours? Well, we live in (UTC+02:00) Jerusalem time zone. We think that UTC offset crawls somehow into the result. To prove our

hypothesis we would like to change time zone on sql servers, but customer won't agree on

such an experiment.

This effect probably means that there is some hidden bug in SQL Server 2008 R2 that we cannot

reliably reproduce, but we can see that the datediff(ms,

start_timestamp, end_timestamp) may return negative value when it's known

that start_timestamp is acquired before end_timestamp.

Update: What a shame. During tunning of the original logging procedures we have changed type from datetime to datetime2, and calls from GETUTCDATE() to current_timestamp, except one place (default value in the table definition) where it remained with GETUTCDATE().

So, negative durations meant operation timeout (in our case duration is greater than 30 secs).

This time we

update csharpxom to adjust it to C# 4.5.

Additions are async modifier and

await operator.

They are used to simplify asynchronous programming.

The following example from

the msdn:

private async Task<byte[]> GetURLContentsAsync(string url)

{

var content = new MemoryStream();

var request = (HttpWebRequest)WebRequest.Create(url);

using(var response = await request.GetResponseAsync())

using(var responseStream = response.GetResponseStream())

{

await responseStream.CopyToAsync(content);

}

return content.ToArray();

}

looks like this in csharpxom:

<method name="GetURLContentsAsync" access="private" async="true">

<returns>

<type name="Task" namespace="System.Threading.Tasks">

<type-arguments>

<type name="byte" rank="1"/>

</type-arguments>

</type>

</returns>

<parameters>

<parameter name="url">

<type name="string"/>

</parameter>

</parameters>

<block>

<var name="content">

<initialize>

<new-object>

<type name="MemoryStream" namespace="System.IO"/>

</new-object>

</initialize>

</var>

<var name="request">

<initialize>

<cast>

<invoke>

<static-method-ref name="Create">

<type name="WebRequest" namespace="System.Net"/>

</static-method-ref>

<arguments>

<var-ref name="url"/>

</arguments>

</invoke>

<type name="HttpWebRequest" namespace="System.Net"/>

</cast>

</initialize>

</var>

<using>

<resource>

<var name="response">

<initialize>

<await>

<invoke>

<method-ref name="GetResponseAsync">

<var-ref name="request"/>

</method-ref>

</invoke>

</await>

</initialize>

</var>

</resource>

<using>

<resource>

<var name="responseStream">

<initialize>

<invoke>

<method-ref name="GetResponseStream">

<var-ref name="response"/>

</method-ref>

</invoke>

</initialize>

</var>

</resource>

<expression>

<await>

<invoke>

<method-ref name="CopyToAsync">

<var-ref name="responseStream"/>

</method-ref>

<arguments>

<var-ref name="content"/>

</arguments>

</invoke>

</await>

</expression>

</using>

</using>

<return>

<invoke>

<method-ref name="ToArray">

<var-ref name="content"/>

</method-ref>

</invoke>

</return>

</block>

</method>

After C++11 revision has been approved a new cycle of C++ design has begun:

N3370:

The C++ standards committee is soliciting proposals for additional library components.

Such proposals can range from small (addition of a single signature to an existing

library) to large (something bigger than any current standard library component).

At this stage it's interesting to read papers, as authors try to express ideas

rather than to formulate sentences that should go into spec as it lately was.

These are just several papers that we've found interesting:

|

N3322

|

12-0012

|

A Preliminary Proposal for a Static if

|

Walter E. Brown

|

|

N3329

|

12-0019

|

Proposal: static if declaration

|

H. Sutter, W. Bright, A. Alexandrescu

|

Those proposals argue about compile time "if statement". The feature can replace #if preprocessor

directive, a SFINAE or in some cases template specializations.

A static if declaration can appear wherever a declaration or a statement is

legal.

Authors also propose to add static if clause to a class and a function declarations

to conditionally exclude them from the scope.

Examples:

// Compile time factorial.

template <unsigned n>

struct factorial

{

static if (n <= 1)

{

enum : unsigned { value = 1 };

}

else

{

enum : unsigned { value = factorial<n - 1>::value * n };

}

};

// Declare class provided a condition is true.

class Internals if (sizeof(void*) == sizeof(int));

Paper presents strong rationale why this addition helps to build better

programs, however the questions arise about relations between static if

and concepts, static if clause and an error diagnostics.

|

N3327 |

12-0017 |

A Standard Programmatic Interface for Asynchronous Operations |

N. Gustafsson, A. Laksberg |

|

N3328 |

12-0018 |

Resumable Functions |

Niklas Gustafsson |

That's our favorite.

Authors propose an API and a language extensions to make asynchronous programs

simpler.

In fact, asynchronous function will look very mush as a regular one but with small

additions. It's similar to yield return in C# (a

construct that has been available in C# for many years and is well vetted),

and to async expression in C# 4.5. Compiler will rewrite such a

function into a state machine, thus function can suspend its execution, wait

for the data and to resume when data is available.

Example:

// read data asynchronously from an input and

write it into an output.

int cnt = 0;

do

{

cnt = await streamR.read(512, buf);

if (cnt == 0)

{

break;

}

cnt = await streamW.write(cnt, buf);

}

while(cnt > 0);

It's iteresting to see how authors will address yield return: either with

aditional keyword, or in terms of resumable functions.

|

N3340 |

12-0030 |

Rich Pointers |

D. M. Berris, M. Austern, L. Crowl |

Here authors try to justify rich type-info but mask it under the

name "rich pointers". To make things even more obscure they argue about dynamic code

generation.

If you want a rich type-info then you should talk about it and not about thousand

of other things.

We would better appealed to create a standard API to access post-compile object

model, which could be used to produce different type-infos or other source

derivatives.

This paper is our outsider. :-)

|

N3341 |

12-0031 |

Transactional Language Constructs for C++ |

M. Wong, H. Boehm, J. Gottschlich, T. Shpeisman, et al. |

Here people try to generalize (put you away from) locking, and replace it with

other word "transaction".

Seems it's not viable proposition. It's better to teach on functional style of

programming with its immutable objects.

|

N3347 |

12-0037 |

Modules in C++ (Revision 6) |

Daveed Vandevoorde |

Author argues against C style source composition with #include

directive, and propose alternative called "modules".

We think that many C++ developers would agree that C pre-processor is a legacy that

would never have existed, but for the same reason (for the legacy, and compatibility) it

should stay.

In out opinion the current proposition is just immature, at least it's not

intuitive. Or in other words there should be something to replace the C

pre-processor (and #include as its part), but we don't like this

paper from aestetic perspective.

|

N3365 |

12-0055 |

Filesystem Library Proposal (Revision 2) |

This proposal says no a word about asynchronous nature of file access, while it

should be designed around it.

|